The reality of AI writing

Writing tools have moved past simple spellcheckers. Now, platforms like The Good AI have millions of users generating full drafts to get past writer's block. This changes how we think about authorship.

The increasing prevalence of AI assistance in academic writing is creating both excitement and anxiety. Students are exploring ways to leverage these tools to improve their work, but educators are grappling with questions of originality, authorship, and academic integrity. The ease with which AI can produce text raises concerns about plagiarism and the devaluation of critical thinking skills.

Currently, one of the biggest challenges is the lack of universally accepted guidelines for citing AI-generated content. Existing citation styles such as MLA, APA, and Chicago were not designed with AI as a source in mind, and the interim guidance each has issued differs in important ways. This ambiguity leaves students and researchers unsure how to attribute their use of these tools, which can have ethical and academic consequences. Frankly, it's a bit of a Wild West right now.

I believe acknowledging these ethical concerns upfront is vital. While AI offers incredible potential, ignoring its capacity for misuse or the need for clear standards would be a disservice to both students and the academic community. The conversation needs to be proactive, not reactive.

Purdue OWL standards

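The Purdue OWL (Online Writing Lab) is often considered the gold standard for essay formatting and citation guidance. As of late 2024 and early 2025, their guidance on citing AI is still evolving, and it follows the recommendations the MLA and APA have published. MLA style does not list the AI tool as an author; the entry instead begins with a description of your prompt. APA style places the company that developed the model (for example, OpenAI) in the author position, with the model's name and version in the title.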

However, this approach isn’t without its complexities. What do you do when the AI tool doesn’t have a clearly defined "author"? Many models are developed by large teams or organizations, making individual attribution difficult. Furthermore, how do you account for multiple iterations and prompts? If you refine your prompts several times to achieve a desired result, which version of the prompt should you cite?

Purdue OWL suggests providing as much detail as possible about the AI tool used, including the version number and the date you accessed it. They also recommend describing your prompts in the works-cited entry or in a note. This allows readers to understand how the AI-generated content was created and to assess its reliability.

Treating a machine as a citable source is a strange fit, but it is the current standard. It forces writers to be honest about where their text came from, even if the machine didn't 'write' it in the human sense.

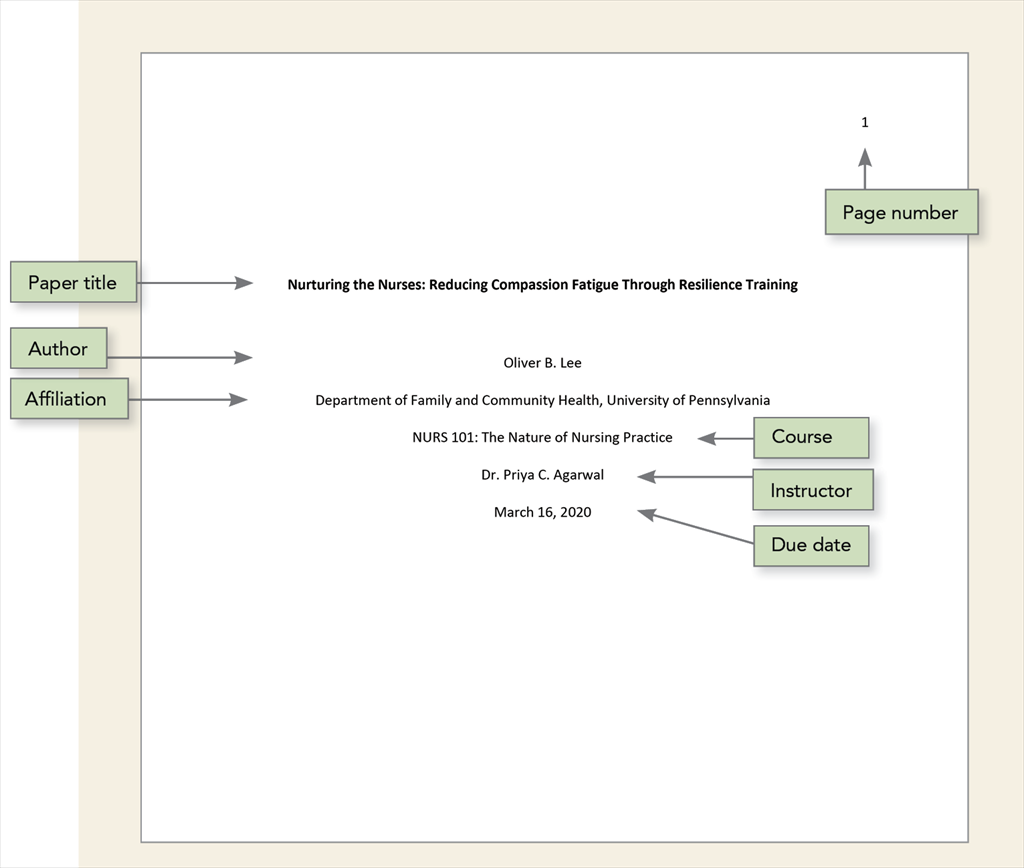

MLA 2026: A Projected Approach

Predicting the future of MLA formatting is always a bit of a guessing game, but based on current trends and Purdue OWL's guidance, we can project how MLA's guidance on AI-generated content might evolve by 2026. I anticipate a more nuanced approach than a single, one-size-fits-all citation template.

I expect the MLA will require specific elements in the citation, including the name of the AI model (e.g., GPT-4, Gemini), the version used, the date you accessed the tool, and a detailed description of your prompts. This description will be crucial for understanding the context of the generated text and assessing its originality. Because AI tools rarely give the same answer twice, think of it as providing enough information for someone else to retrace your steps, even if they can't reproduce your exact output.

The level of student editing will also be a key factor. If the AI generates a rough draft that the student then heavily revises and rewrites, the citation might present the AI's contribution as minimal. If the AI generates a substantial portion of the final text with little alteration, the acknowledgment will need to be more prominent.

We also need to be realistic about how much editing students actually do. Most will not simply copy and paste AI-generated text without any changes, so their work will fall somewhere along a spectrum of AI involvement. The MLA will likely need to account for that spectrum, perhaps with different citation formats depending on the degree of student contribution. A potential format might look like this:

Author (if any), "Prompt to AI Model," AI Model Name (Version), Date Accessed, Description of Prompt.

Students will need a step-by-step guide to this projected MLA 2026 format, because it requires a shift in thinking about what constitutes a 'source' and how to accurately represent the role of AI in the writing process. The basic steps are:

- Name the specific tool or model, such as ChatGPT or Gemini.

- Record the specific version of the model.

- Note the date you accessed the AI tool.

- Save your exact prompts in a separate document.

- Assess the level of editing you performed on the AI-generated text.

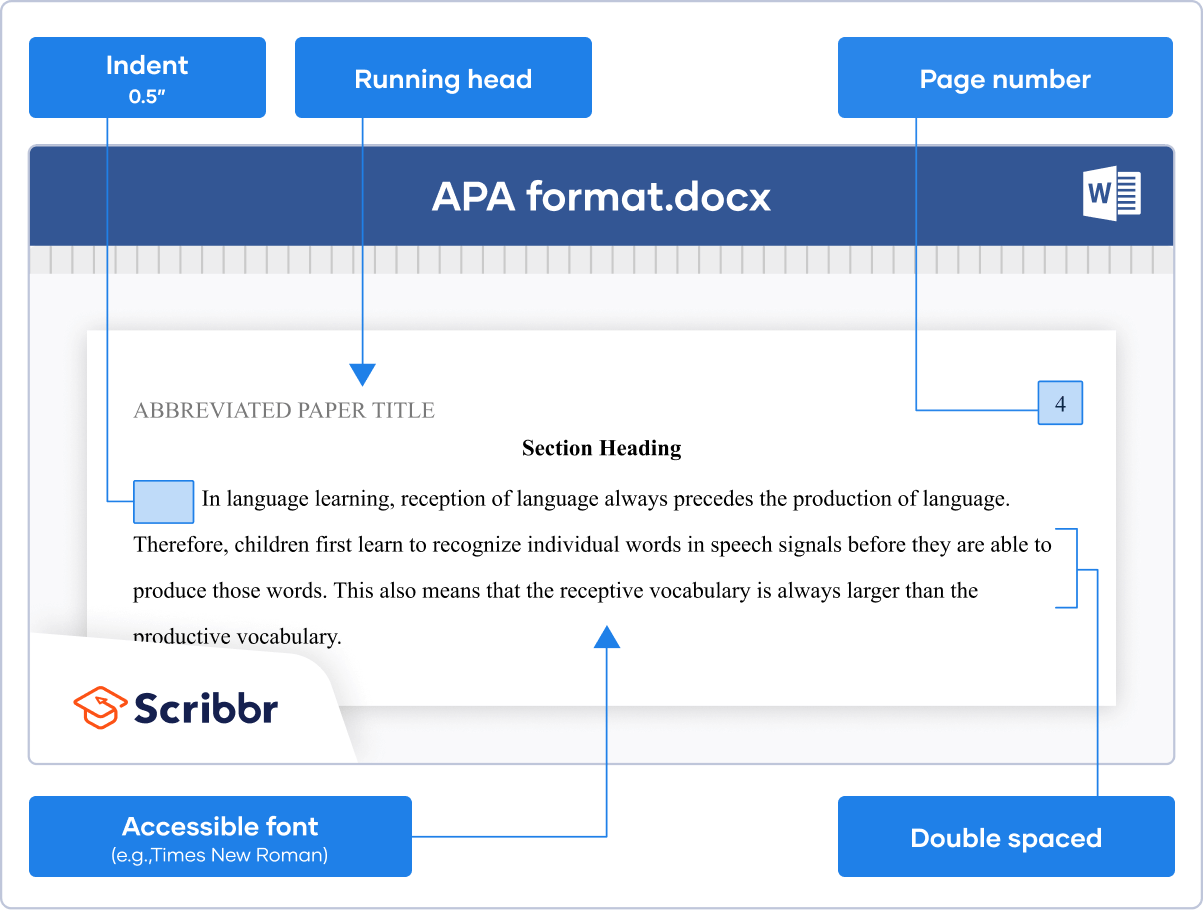

APA format and AI

APA 7th edition was published in 2019, before generative AI tools were in wide use, so the manual itself handles AI only indirectly, through its general rules for acknowledging software and other tools. APA Style's 2023 supplementary guidance fills part of that gap by treating AI-generated text as the output of an algorithm and crediting the developer in the author position, as in: OpenAI. (2023). ChatGPT (Mar 14 version) [Large language model]. https://chat.openai.com/chat. Throughout, the emphasis is on transparency and avoiding plagiarism.

Applying these guidelines to AI-generated text still presents challenges. Should the AI itself be listed as an author of your paper? APA generally reserves authorship for individuals who have made a significant intellectual contribution to the work, and it's debatable whether an AI model meets that criterion. The current recommendation is to describe how you used the AI tool, for example in the methodology section, and to cite the tool in the reference list.

I project that APA might adapt its approach in 2026, potentially emphasizing transparency in the writing process even more strongly. This could involve requiring researchers to explicitly state how AI was used, what prompts were provided, and how the AI-generated text was evaluated and revised. The focus will likely remain on the researcher’s intellectual contribution.

In research papers, I anticipate APA will encourage researchers to describe their use of AI in the methodology section, similar to how they would describe other research tools or techniques. This would provide readers with a clear understanding of how AI influenced the research process and allow them to assess the validity of the findings.

Is prompt engineering authorship?

Prompt engineering deserves a closer look. If the quality of the AI-generated text depends heavily on the prompts provided, should the prompter be considered an author? This is a complex question with no easy answers. A well-crafted prompt can elicit a nuanced and insightful response from an AI model, while a poorly worded prompt can produce irrelevant or nonsensical text.

The ethical implications of claiming authorship for AI-assisted work are significant. If a student simply provides a basic prompt and then submits the AI-generated text as their own, that raises serious concerns about academic integrity. However, if a student spends considerable time refining their prompts, iteratively improving the results, and then substantially editing the AI-generated text, the case for recognizing their intellectual contribution is stronger.

The concept of "intellectual contribution" is central to this debate. Traditionally, authorship has been associated with original thought, critical analysis, and creative expression. Does prompt engineering qualify as intellectual contribution? It requires skill, knowledge, and creativity to craft effective prompts, but it's different from traditional forms of authorship.

This is a tricky area, and I think it will be debated for a long time. There is no consensus on where to draw the line between AI assistance and human authorship. What’s clear is that simply using an AI tool doesn’t automatically entitle someone to claim authorship of the resulting text.

Detecting and Disclosing AI Usage

The limitations of AI detection tools are significant. While these tools can sometimes identify AI-generated text, they are not foolproof and can often produce false positives. Relying solely on AI detection tools to identify plagiarism is unreliable and can lead to unfair accusations.

Honest disclosure of AI assistance is crucial. Students should be encouraged to openly acknowledge when and how they used AI in their work. This demonstrates academic integrity and allows educators to assess the student's understanding of the material.

The potential consequences of academic dishonesty related to AI are severe, ranging from failing grades to expulsion. Students need to understand that submitting AI-generated text as their own without proper attribution is a form of plagiarism.

I think the role of educators is to foster responsible AI use, not just try to ban it. This involves teaching students how to use AI ethically, how to critically evaluate AI-generated content, and how to properly cite their sources. It's about equipping them with the skills they need to navigate this new landscape.
