The Shifting Landscape of Academic Honesty: AI's Arrival

AI writing tools are shaking the foundations of academic integrity. Educators are grappling with technology that challenges assumptions about authorship. Students are also navigating this change, facing both temptation and anxiety.

Historically, plagiarism has centered on the unauthorized copying of existing work – a relatively straightforward concept to understand and detect. But AI presents a different beast. It doesn’t simply copy; it generates new text, making traditional plagiarism detection methods less effective. This isn't just about students finding ways to cheat; it's about a fundamental change in how writing is being produced and assessed.

I believe the conversation needs to move beyond framing this solely as a matter of academic dishonesty. While misuse is a concern, the technology itself isn't inherently unethical. The challenge lies in adapting our understanding of authorship, learning, and assessment in a world where AI can produce remarkably coherent and seemingly original text. It's a complex situation, and easy answers are scarce.

What AI Writing Tools *Actually* Do: Beyond 'Essay Generation'

The term 'AI essay writer' conjures images of a program churning out complete, polished essays with minimal human input. The reality is more nuanced. These tools are powered by large language models (LLMs) – complex algorithms trained on massive datasets of text and code. They excel at predicting the next word in a sequence, allowing them to generate human-like text.
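To make "predicting the next word" concrete, here is a toy sketch in Python. It is not how a production LLM works (those use neural networks over sub-word tokens and far more context); it only counts which word follows which in a tiny sample text and returns the most frequent follower. The function names and the sample sentence are invented for illustration.

```python
from collections import Counter, defaultdict
from typing import Optional


def build_bigram_counts(text: str) -> dict:
    """Count, for each word, how often each other word follows it."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def predict_next(followers: dict, word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if the word is unseen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical sample text, made up for this illustration.
corpus = (
    "the student writes the essay and the student revises the essay "
    "and the student submits the draft"
)
model = build_bigram_counts(corpus)
print(predict_next(model, "the"))  # prints "student" (seen 3 times after "the")
```

The toy model can only echo patterns it has already seen, which is a useful intuition for why fluent output does not imply understanding.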

These tools differ in focus. EduWriter.ai offers essay generation and plagiarism checking, while Yomu.ai focuses on citation formatting. Both are collections of LLM-based tools for specific tasks rather than single-purpose essay machines.

Yomu.ai is presented as a solution for citation accuracy. Although it claims to format citations correctly, testing shows inconsistencies: a 2024 review found problems with APA style when handling multiple authors. EduWriter.ai also offers citation features, but user reports suggest similar limitations. The tools are improving, but they aren’t flawless.

The core limitation is that LLMs lack true understanding. They can mimic style and structure, but they don’t possess the critical thinking skills necessary for original research or nuanced analysis. They are excellent at synthesizing existing information, but not at creating new knowledge.

Qualitative Comparison of AI Writing Tools

| Feature | EduWriter.ai | Yomu.ai |
|---|---|---|
| Citation Accuracy | Fair - Requires careful review | Fair - Similar level of review needed |
| Paraphrasing Quality | Good - Generally produces varied phrasing | Fair - Can sometimes be repetitive |
| Plagiarism Detection | Includes built-in tool, but external verification recommended | Offers plagiarism check as part of service |
| Style Options | Offers APA and MLA generators | Supports multiple styles, including APA and MLA |
| Ease of Use | Straightforward interface, simple prompts | User-friendly, focused on essay building |
| Advanced Features | Offers 'Advanced Usage' with premium subscription | Highlights formatting capabilities |
| Human Editing Support | Offers discount on human editors with premium | Not explicitly mentioned |

This comparison is qualitative. Confirm current product details in each tool's official documentation before relying on them.

Current Detection Methods: How Reliable Are They, Really?

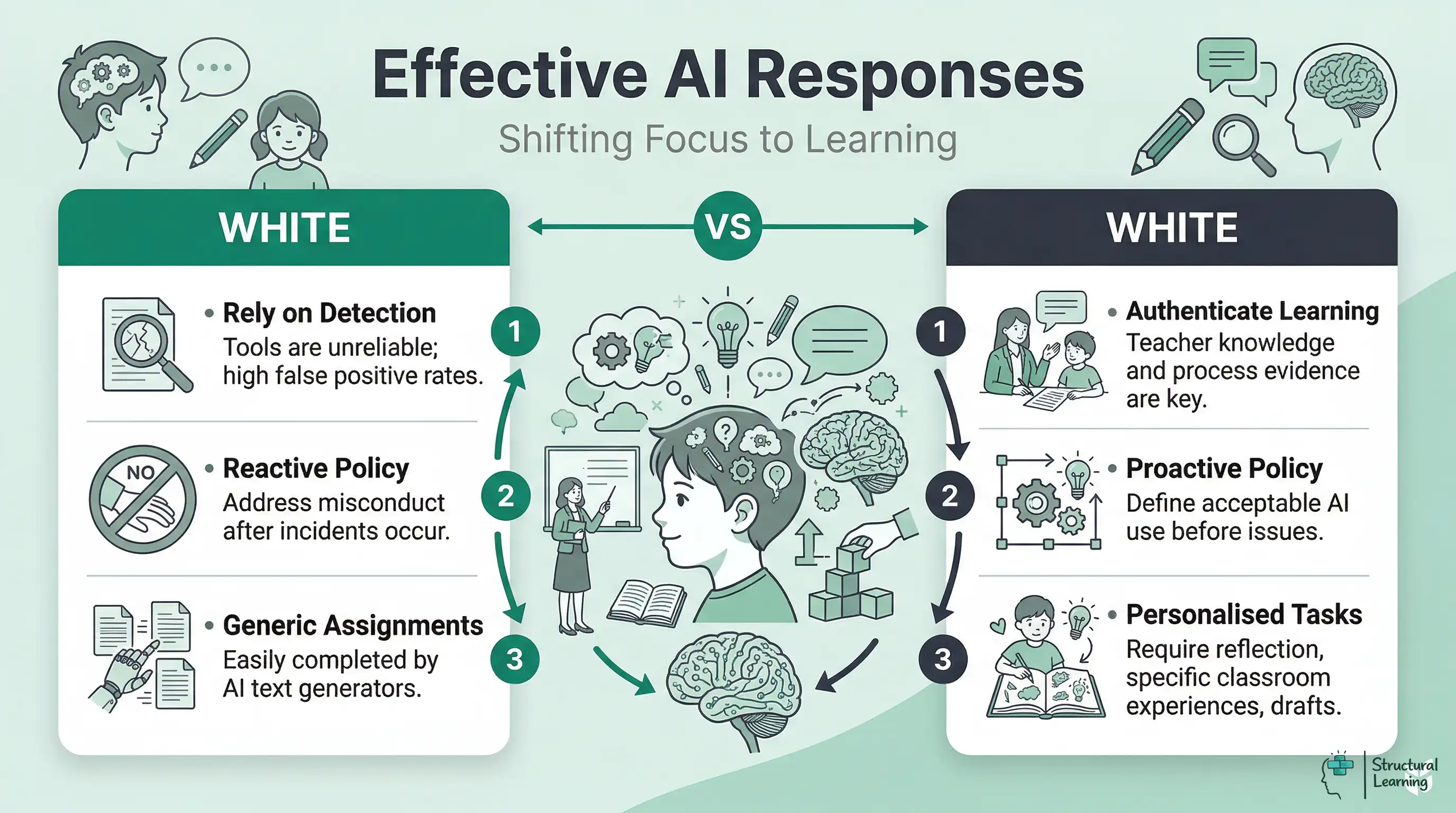

Universities are scrambling to adopt methods for detecting AI-generated text. The most prominent approach involves AI detection software like Turnitin’s AI writing detection feature and GPTZero. These tools analyze text for patterns characteristic of AI-generated content – things like predictable phrasing, unusual sentence structure, and a lack of stylistic variation.

However, the accuracy of these tools is a major concern. Studies have shown significant false positive rates, meaning that human-written text can be incorrectly flagged as AI-generated. GPTZero, for instance, has been known to misidentify original work, particularly from non-native English speakers. This raises serious ethical questions about the potential for unfair accusations.
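A back-of-the-envelope calculation shows why even a modest false positive rate matters. The Python sketch below applies simple base-rate arithmetic. Every number in it (1,000 submissions, a 10% share of AI-written work, a 90% detection rate, and a 1% false positive rate) is a hypothetical placeholder, not a measured figure for GPTZero, Turnitin, or any other tool.

```python
def flagged_breakdown(n_submissions, ai_share, true_positive_rate, false_positive_rate):
    """Return expected (correct flags, wrong flags, share of flags that are wrong)."""
    ai_written = n_submissions * ai_share
    human_written = n_submissions - ai_written
    correctly_flagged = ai_written * true_positive_rate
    wrongly_flagged = human_written * false_positive_rate
    flagged = correctly_flagged + wrongly_flagged
    share_wrong = wrongly_flagged / flagged if flagged else 0.0
    return correctly_flagged, wrongly_flagged, share_wrong


# All inputs below are hypothetical values chosen for illustration only.
correct, wrong, share = flagged_breakdown(
    n_submissions=1000,
    ai_share=0.10,
    true_positive_rate=0.90,
    false_positive_rate=0.01,
)
print(f"{wrong:.0f} of {correct + wrong:.0f} flags are false alarms ({share:.0%})")
```

Under these assumed inputs, about 9 of 99 flagged submissions would be human-written. Raising the assumed false positive rate to 5% would mean 45 wrongly flagged submissions, one third of all flags.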

What’s more, these detection methods are surprisingly easy to circumvent. Even minor edits – rephrasing a sentence, adding a personal anecdote, or changing the tone – can often fool the detectors. It’s an arms race, with AI writing tools constantly evolving to evade detection. Relying solely on these tools is a flawed strategy.

I’m skeptical of relying too heavily on detection software. It feels like a reactive measure, addressing the symptoms rather than the root cause. A more proactive approach involves rethinking assessment methods to prioritize critical thinking and original thought, skills that AI currently struggles to replicate.

Educator Concerns: AI Detection in 2026

- False Positives Are Common: Many educators report AI detection tools incorrectly flagging human-written work as AI-generated. This is a significant concern for equitable assessment.

- Limitations of Current Tools: Tools like Turnitin’s AI writing detection feature, while widely used, are acknowledged to have limitations and aren't foolproof. They indicate *potential* AI use, not definitive proof.

- Focus on Writing Process: Educators are increasingly shifting focus from simply *detecting* AI use to assessing the student's writing process – drafts, outlines, and in-class writing exercises.

- AI Detection as a Starting Point: Many instructors view AI detection reports as a signal for further investigation, requiring a conversation with the student and a review of their work, rather than automatic penalties.

- Stylometric Analysis Challenges: Experts note that AI detection often relies on stylometric analysis, which can be fooled by even minor revisions to AI-generated text, or by students adopting AI writing styles themselves.

- Bias in Detection Algorithms: Detection algorithms may be biased, disproportionately flagging work from non-native English speakers or students with unique writing styles.

- Need for Pedagogical Adaptation: The rise of AI tools necessitates a re-evaluation of assessment methods and a focus on skills that AI currently struggles with – critical thinking, original analysis, and creative problem-solving.

Formatting Standards in the Age of AI: A 2026 Forecast

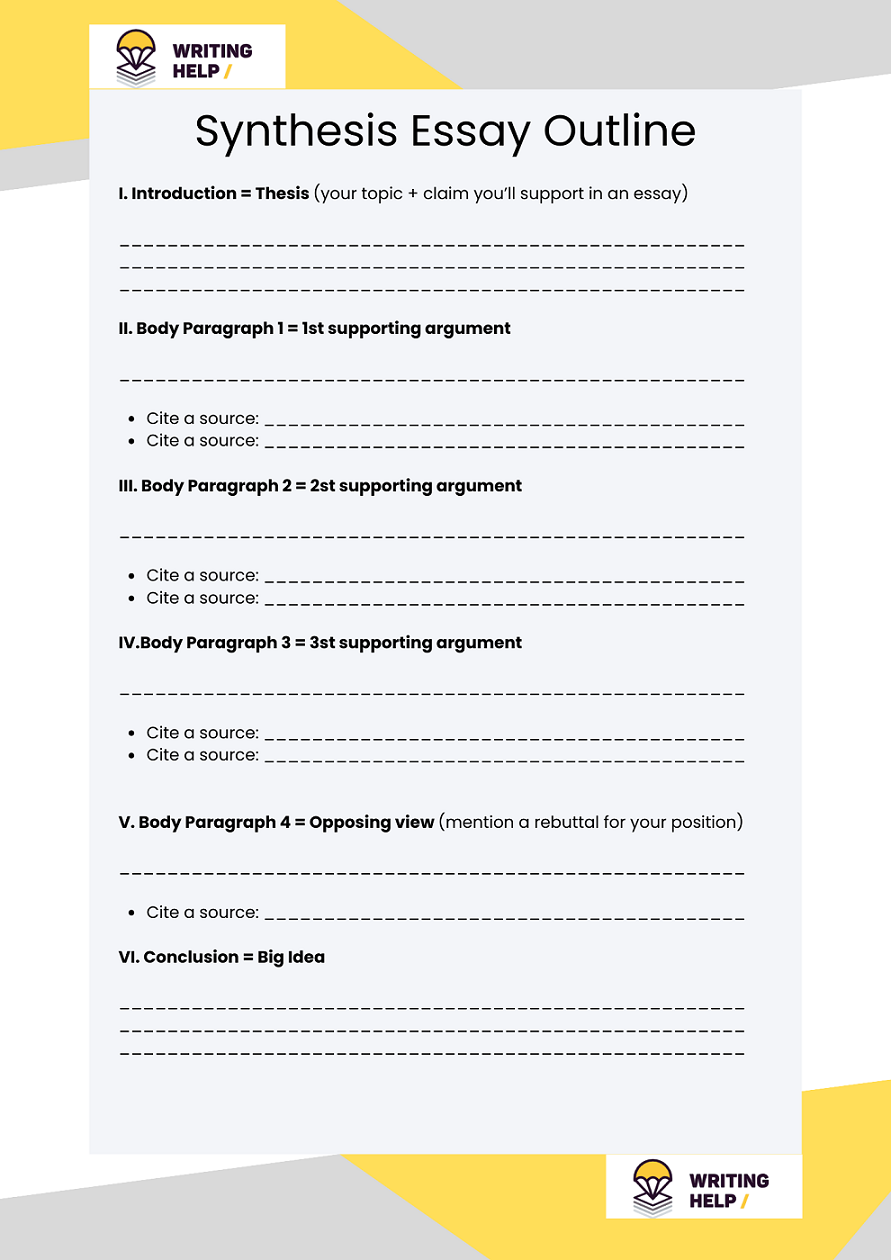

The rise of AI writing tools necessitates a reevaluation of traditional formatting guidelines like MLA, APA, and Chicago. Currently, these styles focus on documenting sources and presenting information clearly. But what happens when the 'source' is an AI? The existing rules simply don’t address this scenario.

I anticipate a move towards requiring students to submit drafts showing the evolution of their work. This would provide instructors with a clearer understanding of the student’s writing process and make it more difficult to submit entirely AI-generated content. A detailed revision history could become a standard component of many assignments.

We may also see a greater emphasis on in-class writing assignments and oral presentations. These formats are more difficult to automate and allow instructors to assess a student’s understanding directly. This might mean a shift away from lengthy research papers and towards more frequent, shorter writing tasks.

Citation styles will need to evolve to acknowledge AI assistance. Guidance on citing ChatGPT and other LLMs is still recent and applied inconsistently. By 2026, I expect to see standardized guidelines for citing AI tools, covering details such as the model used, the date accessed, and the specific prompts provided. This will be crucial for maintaining academic integrity and transparency.

Furthermore, institutions might adopt policies requiring students to declare any use of AI tools in their work, similar to acknowledging the use of a research assistant. This declaration wouldn’t necessarily be a mark against the student, but rather a statement of transparency and responsible AI use.

The 'AI as Tool' Argument: When is AI Assistance Acceptable?

The debate isn’t simply about banning AI; it’s about defining its appropriate role in the academic process. AI can be a valuable tool for brainstorming ideas, outlining arguments, and improving grammar and style. It can assist with tasks like summarizing research papers or identifying potential sources.

However, the line is crossed when AI is used to generate substantial portions of the work without proper attribution. Submitting AI-generated text as one’s own is, fundamentally, a form of plagiarism. The key distinction lies between using AI to support the writing process and using AI to replace the writer.

I believe that some level of AI assistance is inevitable and potentially beneficial. It can free up students to focus on higher-order thinking skills, such as critical analysis and creative problem-solving. But transparency is paramount. Students should be encouraged to acknowledge the use of AI tools and explain how they were used.

A framework for responsible AI use might include guidelines on the permissible level of AI assistance, a requirement for clear attribution, and a focus on developing skills that AI currently struggles to replicate. Acceptable supporting uses might include:

- Brainstorming: Using AI to generate potential topics or arguments.

- Outlining: Utilizing AI to create a structured outline for an essay.

- Grammar and Style Checking: Employing AI tools to identify and correct errors.

- Summarization: Using AI to condense lengthy research papers.

- Citation Assistance: Leveraging AI to format citations (with careful verification).

Navigating Citation: Acknowledging AI's Role in Your Work

Citing AI tools is a rapidly evolving area, and definitive guidelines are still emerging. However, the underlying principle remains the same: transparency and attribution. You must acknowledge the source of any ideas or text that were generated by AI.

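One approach is to treat the AI tool as a personal communication. In MLA format, you might cite it as a conversation or interview. In APA format, you could cite it as unpublished personal communication. Note, however, that both the MLA and the APA have published their own guidance on citing generative AI, and it treats the output as the product of a software tool rather than as a conversation with a person, so check the current style guide before adopting this approach.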

The citation should include the name of the tool (e.g., ChatGPT), the version used (if available), the date accessed, and the specific prompts used to generate the text. This allows readers to understand how the AI was used and to assess the validity of the information.

For example, an MLA citation might look like this: OpenAI. ChatGPT (3.5). 15 Mar. 2024. Prompt: “Summarize the main arguments in Foucault’s Discipline and Punish.” It's crucial to check with your instructor for their specific preferences, as guidelines are still being developed.
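If you keep a log of your AI interactions, a small helper can assemble these fields consistently. The Python sketch below reproduces the illustrative format from the example above. It is not an official MLA or APA template, and the function name `format_ai_citation` and its parameters are hypothetical.

```python
from datetime import date

# MLA-style month abbreviations (short month names are left unabbreviated).
MLA_MONTHS = [
    "Jan.", "Feb.", "Mar.", "Apr.", "May", "June",
    "July", "Aug.", "Sept.", "Oct.", "Nov.", "Dec.",
]


def format_ai_citation(developer, tool, version, accessed, prompt):
    """Build a citation string in the illustrative format used in this article.

    `version` may be an empty string when the tool does not report one.
    """
    tool_part = f"{tool} ({version})" if version else tool
    date_part = f"{accessed.day} {MLA_MONTHS[accessed.month - 1]} {accessed.year}"
    return f"{developer}. {tool_part}. {date_part}. Prompt: “{prompt}”"


citation = format_ai_citation(
    developer="OpenAI",
    tool="ChatGPT",
    version="3.5",
    accessed=date(2024, 3, 15),
    prompt="Summarize the main arguments in Foucault’s Discipline and Punish.",
)
print(citation)
```

Recording the prompt and access date at the time of use is the important habit; the exact punctuation can be adjusted to whatever your instructor or style guide requires.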

Future-Proofing Your Academic Skills: Beyond Avoiding Detection

The focus shouldn’t be on "beating the AI" or avoiding detection. Instead, students should prioritize developing skills that will remain valuable even as AI writing tools become more sophisticated. These include critical thinking, original research, nuanced analysis, and effective communication.

AI can generate text and summarize existing material, but it cannot replicate the ability to think critically, to weigh sources against one another, or to develop a unique perspective. The ability to formulate original arguments and to support them with evidence will be more important than ever.

Developing a strong writing voice is also crucial. AI-generated text often lacks personality and originality. Cultivating a distinctive writing style will help students stand out and demonstrate their intellectual ownership of their work.

Ultimately, the goal is not to become a better AI prompter, but to become a better thinker and writer. Embrace the challenges presented by AI as an opportunity to hone your skills and to deepen your understanding of the world. Intellectual curiosity and a commitment to lifelong learning will be the most valuable assets in the age of AI.
